Automated dependency testing with canary builds

Projects increasingly rely on external dependencies and packages, and these can open gaping security holes when they are not managed properly. We often struggle to find the right balance between regular updates and stability when dealing with package dependencies in our environment. At one extreme, there is the “latest and greatest” approach, where you try to keep up to date with the latest library versions. This is time-consuming and often breaks builds, because new features are introduced and interface contracts change, requiring updates to the code base. At the other extreme, there is the “don’t fix what isn’t broken” approach: package versions are pinned forever and only updated when something stops working. This is undesirable from a security perspective, as security patches should be applied quickly. Both scenarios are problematic!

Many open source components do not provide security patches for older package versions; instead, they bundle security patches with new feature releases. As a result, there is no choice but to upgrade to the latest package version, which can force code changes to match new interfaces where a small security patch would otherwise have been enough. Always keeping the latest dependencies doesn’t work either, because packages often have deep dependency chains. You cannot update one without updating them all, which requires every vendor or developer that publishes packages to release updates in sync. Managing all of this complexity is time-consuming and cumbersome, especially when the number of projects and repositories explodes as a single monolith is broken into hundreds of microservices.

One approach that provides some relief from managing dependencies, and helps to find a better balance between stability and security, is the introduction of canary builds. The idea is to use the CI/CD infrastructure to update packages automatically and then run code builds and tests. Pipelines can be scheduled to update packages automatically before the build phase, using one of two strategies: cutting edge or safety first. The cutting edge strategy upgrades to the very latest package versions, whereas the safety first strategy upgrades only to the latest minor or patch version within the current major version. Cutting edge is the simplest to implement and nice to have, but it often introduces breaking changes and incompatibilities. Safety first relies on package publishers using semantic versioning and publishing fixes for previous package versions. On the consumer side, it can be implemented with the HighestMinor parameter for NuGet, or for npm by pinning major versions with semantic version ranges (the npm semver calculator can help here).
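The two strategies differ only in which releases are eligible for an upgrade. The minimal Python sketch below illustrates that rule; it assumes plain MAJOR.MINOR.PATCH version strings without pre-release tags, and `pick_upgrade` is a hypothetical helper rather than part of any package manager. For packages at 1.0.0 or later, the safety first branch roughly corresponds to what an npm caret range such as `^4.17.15` allows.

```python
def parse_version(version: str) -> tuple[int, int, int]:
    """Split a plain 'MAJOR.MINOR.PATCH' string into integers (no pre-release tags)."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def pick_upgrade(current: str, available: list[str], strategy: str) -> str:
    """Return the version a canary build would move to under the given strategy."""
    cur = parse_version(current)
    candidates = [parse_version(v) for v in available]
    if strategy == "cutting-edge":
        # Newest release overall, even if the major version changes.
        eligible = candidates
    elif strategy == "safety-first":
        # Newest minor/patch release that keeps the current major version.
        eligible = [v for v in candidates if v[0] == cur[0]]
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    # Tuples compare element by element; including `cur` means we never downgrade.
    best = max(eligible + [cur])
    return ".".join(str(part) for part in best)
```

With releases 4.17.15, 4.17.21 and 5.0.0 available, a project on 4.17.15 would move to 5.0.0 under cutting edge, but only to 4.17.21 under safety first.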

Regardless of the strategy chosen, we use our existing unit tests to validate the behaviour of the new packages. If everything works as expected, we automatically commit the new package versions back to our master branch. If something breaks along the way, often because of deep dependency trees, we simply try again at the next scheduled run. Problems often resolve themselves over time, for example once the other packages in a dependency chain release compatible updates. If an upgrade keeps failing for an extended period, our developers can investigate the issue and manually update the code to match the new interfaces.
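The retry-then-escalate policy fits in a few lines. This Python sketch is illustrative only: the `CanaryHistory` class and the default threshold of seven consecutive failures (about a week of daily runs) are assumptions, not taken from our pipeline.

```python
from dataclasses import dataclass


@dataclass
class CanaryHistory:
    """Tracks consecutive failed canary runs (a hypothetical helper, not a real tool)."""
    escalate_after: int = 7  # e.g. one week of daily runs; tune to your schedule
    consecutive_failures: int = 0

    def record(self, succeeded: bool) -> bool:
        """Record one scheduled run; return True when developers should investigate."""
        if succeeded:
            # The upgrades were committed, so the failure streak is over.
            self.consecutive_failures = 0
            return False
        # A failed run is simply retried at the next schedule...
        self.consecutive_failures += 1
        # ...until it has failed often enough to need a human.
        return self.consecutive_failures >= self.escalate_after
```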

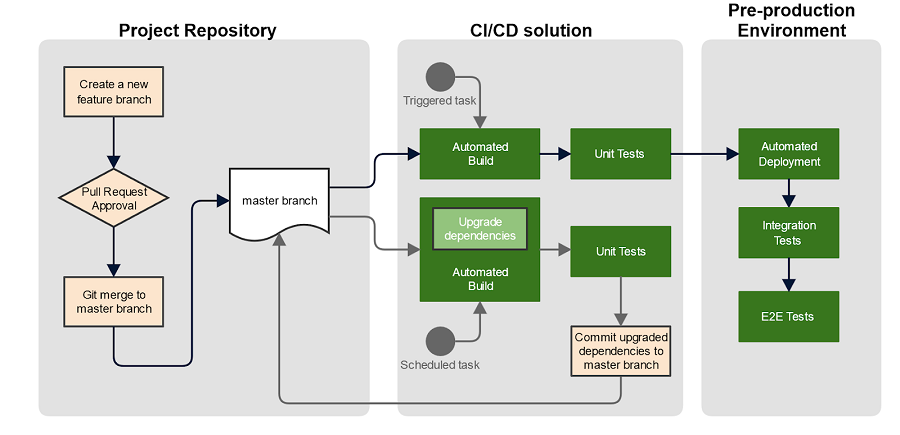

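Our typical pipeline workflow for canary builds therefore looks like this: on a schedule, update the packages, build the code, run the tests, and commit the new package versions to master if everything passes.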

The effectiveness of this method depends directly on the test coverage of the code. Unit, integration, and end-to-end tests help shift the balance towards stability, because they give you confidence that new package versions haven’t regressed the behaviour of your applications.

You can adjust the frequency of canary builds to suit your risk appetite. There is no point attempting to trigger package updates every time there is a change on a branch, so scheduled canary builds work best. We run them daily to ensure we receive all the security updates in a timely manner with minimal manual intervention. You can adjust this to suit the release schedule of your own software and the packages you depend on.

We keep a history of package version updates through git commits and commit messages, so we can audit what was changed and when.
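Generating the commit message from the dependency changes keeps that history easy to audit. This sketch compares two `dependencies` maps, as they might be read from `package.json` before and after an upgrade; the `describe_updates` name and the message format are hypothetical.

```python
def describe_updates(before: dict[str, str], after: dict[str, str]) -> str:
    """Build a commit message listing dependency changes between two version maps."""
    lines = []
    # dict key views support set union, so this covers added, removed and kept packages.
    for name in sorted(before.keys() | after.keys()):
        old, new = before.get(name), after.get(name)
        if old == new:
            continue  # unchanged packages are not worth mentioning
        if old is None:
            lines.append(f"- add {name} {new}")
        elif new is None:
            lines.append(f"- remove {name} {old}")
        else:
            lines.append(f"- bump {name} {old} -> {new}")
    header = f"Canary build: update {len(lines)} package(s)"
    return "\n".join([header, ""] + lines)
```

For example, moving lodash from ^4.17.15 to ^4.17.21 while react stays put yields a one-line summary header followed by `- bump lodash ^4.17.15 -> ^4.17.21`.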

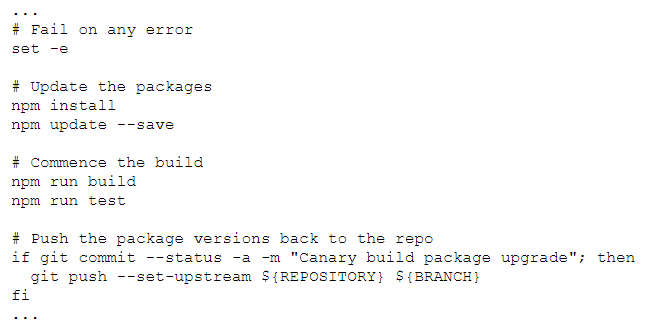

For npm, our canary script updates all packages to their latest versions and then runs a regular build with all the tests. If all tests succeed, the package upgrades are considered successful and the changes are committed back.
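A minimal Python sketch of such a script follows. It is not our actual script: it assumes the npm-check-updates CLI (whose `-u` flag rewrites `package.json` to the latest versions) is reachable through `npx`, that the project defines `build` and `test` npm scripts, and that the build agent may push to `master`. The `runner` parameter exists so the control flow can be exercised without touching a real repository.

```python
import subprocess
from typing import Callable, Sequence

# Upgrade, install, build and test; any non-zero exit code aborts the run.
UPDATE_STEPS = [
    ["npx", "npm-check-updates", "-u"],  # rewrite package.json to the latest versions
    ["npm", "install"],                   # install them and refresh package-lock.json
    ["npm", "run", "build"],              # assumes a "build" script exists
    ["npm", "test"],                      # the tests decide whether the upgrade is kept
]
# `git diff --quiet` exits with 0 when the files are unchanged.
DIFF_CHECK = ["git", "diff", "--quiet", "--", "package.json", "package-lock.json"]
COMMIT_STEPS = [
    ["git", "add", "package.json", "package-lock.json"],
    ["git", "commit", "-m", "Canary build: automated package upgrade"],
    ["git", "push", "origin", "HEAD:master"],
]
ROLLBACK_STEPS = [["git", "checkout", "--", "package.json", "package-lock.json"]]

Runner = Callable[[Sequence[str]], int]


def run(cmd: Sequence[str]) -> int:
    """Run one command and return its exit code."""
    return subprocess.run(list(cmd)).returncode


def canary(runner: Runner = run) -> bool:
    """Upgrade, build and test; commit on success, discard the changes on failure."""
    for cmd in UPDATE_STEPS:
        if runner(cmd) != 0:
            # Leave master untouched; the next scheduled run simply tries again.
            for undo in ROLLBACK_STEPS:
                runner(undo)
            return False
    if runner(DIFF_CHECK) == 0:
        return True  # everything is already up to date, nothing to commit
    for cmd in COMMIT_STEPS:
        if runner(cmd) != 0:
            return False
    return True
```

The scheduled pipeline step would call `canary()` and mark the run as failed when it returns False.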

Nexus

There are often valid reasons to blacklist certain packages from upgrades, so it is recommended to build this into your process. For example, a particular package upgrade may be blocking canary builds, preventing other updates from going out; or a package may break functionality that is not caught by your test suites; or a package may have known bugs, vulnerabilities, or licensing concerns.
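At the canary-build level, a blocklist can be as simple as filtering the planned upgrades before they are applied. This Python sketch uses glob-style version patterns through the standard `fnmatch` module; the package names and patterns in `BLOCKLIST` are placeholders, and the `allowed_upgrades` helper is hypothetical. npm-check-updates offers something similar through its `--reject` option, which excludes packages by name.

```python
import fnmatch

# Hypothetical blocklist: package name -> glob pattern of versions to avoid.
BLOCKLIST = {
    "blueimp-file-upload": "9.*",  # placeholder for known-vulnerable releases
    "some-flaky-package": "*",     # placeholder for a package our tests don't cover well
}


def allowed_upgrades(planned: dict[str, str], blocklist: dict[str, str] = BLOCKLIST) -> dict[str, str]:
    """Drop any planned upgrade whose target version matches a blocklist pattern."""
    return {
        name: version
        for name, version in planned.items()
        if not (name in blocklist and fnmatch.fnmatchcase(version, blocklist[name]))
    }
```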

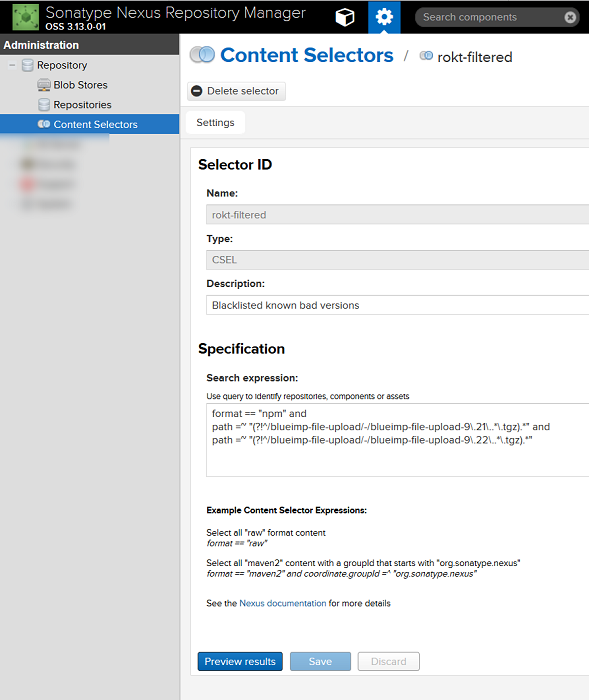

We rely on Nexus to help us manage this. Nexus is a repository manager that supports most common package formats, including npm and NuGet. While canary builds keep packages up to date, Nexus makes sure that unwanted packages are not used. We have set up Nexus to act both as a repository for our own packages and as a proxy for all external repositories. We also use it to block specific packages from being downloaded by the build agent's package manager client.

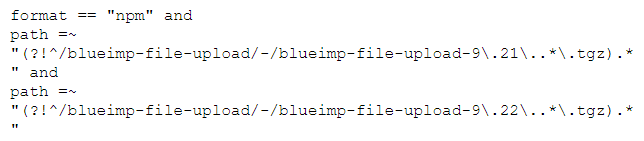

Take, for example, certain versions of the jQuery File Upload package that are known to be vulnerable and should not be anywhere near our code. Nexus content selectors can restrict access to particular versions by matching package names and paths with the Nexus content selector expression language (CSEL).

Here's how to do it.

First, create a content selector:

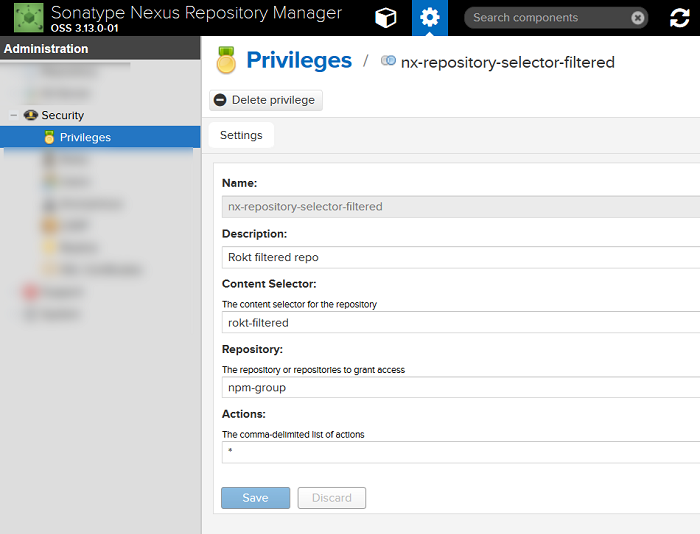

Next, create a privilege to access this selector:

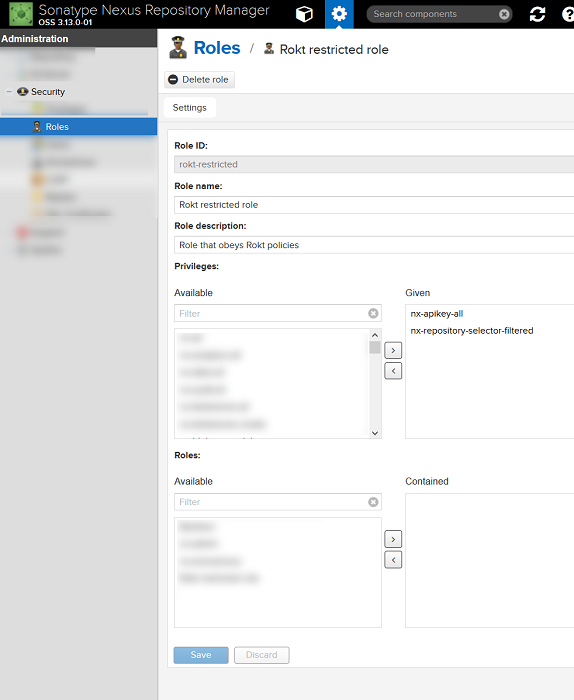

Then apply it to the build agent's Nexus account role:

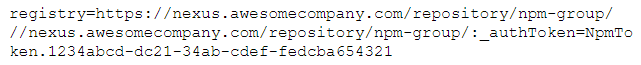
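If the build agent's access comes only through this selector's privilege, the selector has to describe what the agent is allowed to download, which is everything except the blocked versions. The Python sketch below models that with a regular expression containing a negative lookahead. It assumes npm tarballs are served under paths of the form /name/-/name-version.tgz and uses a hypothetical blocked range (every 9.x release of `blueimp-file-upload`). It is a local model for reasoning about patterns, not CSEL syntax, so check how the Nexus regex operator behaves before relying on a pattern like this.

```python
import re

# Hypothetical blocked range: every 9.x.y release of blueimp-file-upload.
BLOCKED = r"/blueimp-file-upload/-/blueimp-file-upload-9\.\d+\.\d+\.tgz"

# The selector grants access, so the blocked pattern is wrapped in a
# negative lookahead: any path that is not exactly a blocked tarball matches.
ALLOW_SELECTOR = re.compile(rf"^(?!{BLOCKED}$).*$")


def agent_may_download(path: str) -> bool:
    """Return True if the modelled selector would let the build agent fetch `path`."""
    return ALLOW_SELECTOR.match(path) is not None
```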

Finally, disable anonymous access to the repository and configure the package manager client to use Nexus as its global repository. In the case of npm, this can be done on a per-user or per-project basis by creating an .npmrc file in the user's home folder (for per-user settings) or in the project's root folder (for per-project settings).
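The .npmrc itself only needs a couple of lines: the registry URL and credentials keyed to that registry. This Python sketch renders them; the URL https://nexus.example.com/repository/npm-group/, the repository name, and the use of basic credentials via `_auth` are assumptions. In a real pipeline, the credentials would come from the CI system's secret store rather than being committed with the project.

```python
import base64


def render_npmrc(registry_url: str, username: str, password: str) -> str:
    """Render .npmrc lines that route npm through a Nexus npm repository with basic auth."""
    if not registry_url.endswith("/"):
        registry_url += "/"
    # npm keys per-registry credentials by the URL without its scheme, e.g. //host/path/
    auth_key = registry_url.split(":", 1)[1]
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    return f"registry={registry_url}\n{auth_key}:_auth={token}\n"
```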

How this can be improved

Several third-party tools manage the versioning and update process, such as npm-check-updates or NuKeeper. Other tools and services, such as nvd-cli or Snyk.io, help to identify only those packages with known security vulnerabilities. We currently run only unit tests against upgraded packages. The next step in our CI/CD evolution would be to create disposable environments during the build phase, allowing us to run full integration or E2E tests against canary builds and gain further confidence that there are no code regressions.